Concept

The NextPerception project intends to make a leap beyond the current state of the art in sensing and to achieve a higher level of services based on information obtained by observing people and their environment. We envision that this leap comprises paradigm shifts at the following three conceptual levels of sensor system technology development:

- Smart Perception Sensors – Advanced Radar, LiDAR and Time of Flight (ToF) sensors form the eyes and ears of the system. They will be specifically enhanced in this project to observe human behaviour and health parameters and will be combined with complementary sensors to provide all the information needed in the use cases. Their smart features enable easy integration as part of a distributed intelligent system.

- Distributed Intelligence – this emerging paradigm allows the analytics and decision-making processes to be distributed across the system to optimise its efficiency, performance and reliability. We will develop smart sensors with embedded intelligence and facilities for communicating and synchronising with other sensors. We will also provide the most important missing toolsets, i.e., those for programming networks of embedded systems, for explainable AI and for distributed decision making.

- Proactive Behaviour and Physiological Monitoring – smart sensors and distributed intelligence will be applied to understand human behaviour and provide desired predictive and supportive functionality in the contexts of interest, while preserving users’ privacy.

Objectives

NextPerception aims to exploit enhanced sensing and sensor networks, focusing on the application domains of Health, Wellbeing and Automotive. The starting point is to acquire quality information from sensor nodes (O1). The next step is to transform the data provided by collections of networked sensors into information suitable for proactive decision making, covering among others clinical decision making, wellbeing coaching and vehicle control decisions (O2). The provision of a reference platform to support the design, implementation, and management of distributed sensing and intelligence solutions (O3) greatly facilitates the use and implementation of distributed sensor networks, enabling their practical application in the primary domains of healthcare, wellbeing, and automotive as well as in cross-sector applications. The demonstration of these applications (O4) is key to achieving potential products and services that can ultimately contribute to solving the Global Challenges in Health and Wellbeing, and Transport and Smart Mobility. The picture below states these four project objectives.

Use cases

The project features three use cases in the health and automotive domains, which allow the partners to focus on concrete real-life situations where the project innovations will provide clear benefits. These use cases are Integral vitality monitoring, Driver monitoring, and Safety and comfort at intersections.

Integral vitality monitoring

This use case focusses on measuring and monitoring the health parameters, behaviour and daily activities of persons who might require increased attention or care from a paramedical perspective (e.g. people with decreased functional capabilities due to age or frailty, people at increased risk of developing mild cognitive impairment, or people involved in sport activities).

Driver monitoring

In this use case, we will develop a Driver Monitoring System (DMS) that can classify the driver’s cognitive state (distraction, fatigue, workload, drowsiness), emotional state (anxiety, panic, anger) and intention (turn left or right), as well as the activities and position of the occupants (including the driver) inside the vehicle cockpit. This information will be used for autonomous driving functions, including take-over requests and driver support.

Safety and comfort at intersections

Here we intend to provide safety and comfort for all road users – including Vulnerable Road Users (VRUs) like pedestrians and bikers – at a road intersection. The use case will demonstrate the capability to detect the presence of traffic participants, determine their positions, and track their motion and intent with high reliability. For VRUs specifically, this involves analysing body gestures to derive their intent.

Project organisation

The project is organised in six work packages. WP1 sets the scene by studying the current state of the art (SotA) of technologies and ecosystems, and by deriving requirements from the SotA and a set of NextPerception use cases. WP2 and WP3 subsequently cover the development of single and multiple sensing hardware, firmware, processing and analytics components for the NextPerception concept. WP3 will further help to define and implement the distributed intelligence paradigm and supporting tools. These technologies will be integrated in WP4, where the demonstrators are defined and integrated, as well as evaluated and validated in pilots in accordance with the approach. Dissemination and exploitation of results will be coordinated by WP5, while the whole project is led and supported by the management tasks in WP6.

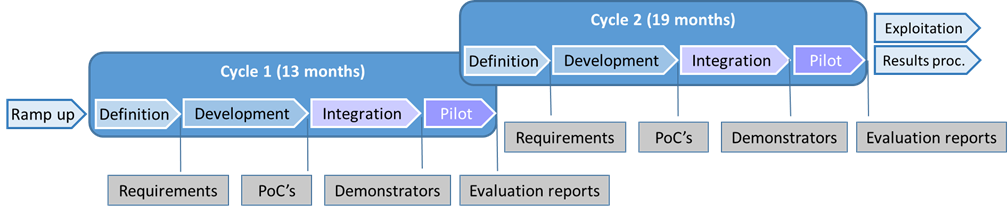

The project will be performed in two cycles and last for three years. The starting date of the project is May 1st 2020, and it will end on April 30th 2023.

The project is funded partially by the European Commission and by national funding agencies. It falls under the ECSEL Joint Undertaking.